There is great misunderstanding regarding AI, artificial intelligence, at least presently. All AI is and can do right now is collect all the input data provided by humans, sort through it at blindingly fast speeds, and spit out the majority view of the rubbish sitting in bits & bytes on servers worldwide.

This will change as technology rapidly advances beyond the ability of mankind to manage it.

Anyone thinking that human beings are inherently good, that righteousness will overcome and prevail prior to the LORD returning a Second and Final Time, is sorely deluded. Living in a fantasy.

The so-called information available to people via every electronic device and glowing screen is only going to become more and more corrupted, more and more biased towards promoting lawlessness, evil, unrighteousness, wickedness, and sin. NOT going to go in the direction of pursuing God and His ways, His words, His Son, the LORD Jesus Christ, Yeshua HaMashiach.

Machines are only shaping what is known, believed, and trusted because of what inherently evil, unrighteous, and sinful men and women are feeding those machines.

To the degree that when the Antichrist appears [my wife and I, along with all truly born again faithful and obedient believers in Jesus Christ, won’t be here to see this], he, along with his false prophet, will have built an image, one that breathes as if alive, speaks as if living, and can see those who have the mark of the beast and those who do not. This will likely be AI so advanced at that point, along with technology worldwide for personal electronic devices, that the image of the beast that appears to live will be able to scan foreheads and hands to see if the name of the Beast/Antichrist is branded or tattooed into their flesh, and single out those who do not have the mark.

That is coming.

Read on…

Ken Pullen, Monday, March 23rd, 2026

AI Bias In Action: When Machines Quietly Shape What We Trust

March 23, 2026

By PNW Staff

Reprinted from Prophecy News Watch

A troubling reminder surfaced this week that artificial intelligence is not the neutral referee many assume it to be–it is, in fact, a reflection of human decisions, human data, and human blind spots.

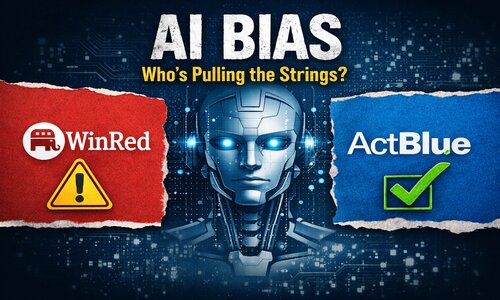

According to the New York Post, a “technical error” led OpenAI’s ChatGPT to flag links to WinRed, the official Republican Party donation platform, as potentially unsafe, while links to ActBlue, the primary Democratic campaign fundraising platform, did not generate similar warnings.

The company has said this was not intentional. But whether accidental or not, the incident exposes something deeper–and more concerning–than a simple glitch. It reveals how fragile trust in AI can be, and how easily bias–subtle or overt–can creep into systems that millions rely on for information.

This is not just about politics. It is about power: who shapes the tools, who feeds them data, and who decides what is seen–or unseen.

1. Bias in Source Data

At the foundation of every AI system is data. Massive amounts of it. But data is never neutral–it reflects the world it was gathered from. If the majority of training data leans toward certain viewpoints, narratives, or cultural assumptions, the AI will naturally echo those patterns.

If one political perspective dominates news articles, academic research, or online discourse within the dataset, the AI may unknowingly prioritize or validate that perspective more often. Over time, this creates an uneven playing field–not because the AI “chooses” sides, but because it learned from an imbalanced source.
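To make the idea of an imbalanced source concrete, here is a toy sketch in Python. It is not how any real AI model is trained; the corpus, the labels `view_a` and `view_b`, and the function `majority_view` are all invented for illustration. It only shows that a system which echoes the most common pattern in its data will echo whatever that data over-represents.

```python
from collections import Counter

def majority_view(corpus):
    """Return the most common stance label and its share of the corpus."""
    counts = Counter(stance for _, stance in corpus)
    stance, n = counts.most_common(1)[0]
    return stance, n / len(corpus)

# Hypothetical corpus: 80 documents lean one way, 20 lean the other.
corpus = [(f"doc{i}", "view_a") for i in range(80)]
corpus += [(f"doc{i}", "view_b") for i in range(80, 100)]

top, share = majority_view(corpus)
print(top, share)
```

Nothing in the function prefers `view_a`. The result follows entirely from the 80/20 split in the made-up data.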

The WinRed vs. ActBlue incident raises an important question: what kinds of signals in the training data might influence how risk or trust is assessed?

2. Bias in Filters and Safety Systems

AI models are not just trained–they are constrained. Filters and safety layers are added to prevent harm, misinformation, or malicious use. But these filters are designed by humans, and humans carry assumptions.

What qualifies as “unsafe”? What gets flagged? What is allowed through?

In this case, one fundraising platform triggered warnings while another did not. Even if caused by a technical error, it highlights how filtering systems can produce uneven results. A slight difference in how rules are applied–or interpreted–can create the appearance of bias, even when the intention is neutrality.
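As a rough illustration of how a small gap in a rule can produce uneven results, consider the Python sketch below. It is an assumption-laden toy: the allowlist, the domains `donate-a.example` and `donate-b.example`, and the function `link_warning` are hypothetical, and nothing here describes how ChatGPT's link checks work or what caused the reported incident.

```python
from urllib.parse import urlparse

# Hypothetical allowlist: "donate-a.example" was added,
# but the equally legitimate "donate-b.example" was never entered.
TRUSTED_DOMAINS = {"donate-a.example"}

def link_warning(url, trusted=TRUSTED_DOMAINS):
    """Return True when a warning would be shown for this link."""
    host = urlparse(url).hostname or ""
    return host not in trusted

print(link_warning("https://donate-a.example/give"))  # no warning
print(link_warning("https://donate-b.example/give"))  # warning shown
```

The rule itself is applied identically to both links. The uneven outcome comes from what a person did or did not put on the list.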

3. Algorithmic Bias

Beyond data and filters lies the algorithm itself–the mathematical framework that determines how information is processed and prioritized.

Algorithms are built with objectives: maximize relevance, reduce harm, increase engagement, ensure accuracy. But how those goals are weighted matters.

If an algorithm is tuned to be overly cautious in certain contexts, it may flag content more aggressively in one area than another. If it is tuned differently elsewhere, the outcome changes. These are not random outcomes–they are the result of design decisions.

The public rarely sees these decisions, yet they shape what billions of people experience.
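The effect of tuning can be sketched in a few lines of Python. This is a simplified assumption, not a description of any real system: the risk score, the context names, and the threshold values are all made up. It shows how the same score can be flagged in one setting and passed in another purely because of a design choice.

```python
# Hypothetical per-context thresholds: a lower value means more cautious.
THRESHOLDS = {"context_a": 0.5, "context_b": 0.7}

def is_flagged(risk_score, context, thresholds=THRESHOLDS):
    """Flag content when its risk score meets or exceeds the context threshold."""
    return risk_score >= thresholds[context]

same_score = 0.6
flag_a = is_flagged(same_score, "context_a")
flag_b = is_flagged(same_score, "context_b")
print(flag_a, flag_b)
```

Identical content with an identical score is treated differently in the two contexts, and the only difference is the number someone chose for each threshold.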

4. The Power of Omission: What’s Left Out

Perhaps the most overlooked form of bias is not what is shown–but what is missing.

AI doesn’t just present information; it selects it. It summarizes, ranks, and filters. In doing so, it inevitably leaves things out.

What if warnings are shown for one type of link but not another? What if certain perspectives are simply absent from the results? The user may never know what they weren’t shown.

This “silent bias” can be more powerful than overt bias, because it operates invisibly. People trust what they see, rarely questioning what has been excluded.

5. Who Decides the Data and Design?

At the center of all of this is a critical question: who decides?

Who selects the training data? Who defines the safety rules? Who tunes the algorithms?

These decisions are made by teams–often well-intentioned–but still limited by their own experiences, perspectives, and institutional cultures. Even without malicious intent, unconscious bias can influence outcomes.

The incident reported by the New York Post underscores why transparency matters. When something goes wrong, the explanation often points to a “technical issue.” But behind every technical system are human choices.

A Necessary Warning for the Future

Artificial intelligence is rapidly becoming a gatekeeper of information. It helps people decide what to read, what to believe, and even what to trust.

That power demands scrutiny.

The lesson here is not to reject AI–but to approach it with discernment. Users must remain aware that these systems are not infallible. They are tools, shaped by imperfect inputs and imperfect design.

Moments like this should not be dismissed as minor glitches. They should serve as wake-up calls.

Because if something as simple as a fundraising link can be unevenly flagged, what else might be quietly influenced beneath the surface?

And perhaps the most important question of all: if we do not question the systems guiding us, who will?

